7 AI Tools Slay Unnecessary GPU Compute Costs


AI tools that automate image, video, and code generation let startups keep GPU bills low by handling routine tasks, pruning wasteful workloads, and surfacing cost alerts before they become surprise invoices.

In 2024, Adobe launched the Firefly AI Assistant, a public beta that lets creators edit across Photoshop and Premiere with simple prompts, a sign that sophisticated AI can be offered at a price startups can actually afford (9to5Mac).

AI Tools: Democratizing AI for Budget Startups

When I first evaluated Adobe’s Firefly AI Assistant, I was struck by how it bundled prompt-driven editing across multiple Creative Cloud apps without the hefty per-hour GPU fees typical of enterprise licenses. The assistant lives in the cloud, yet the cost model is based on a modest usage quota that most indie teams can stay under. This approach shows that AI does not have to be a $100k line item.

In my experience, the biggest advantage for bootstrapped founders is the ability to start with a ready-made model and then fine-tune it on a small, proprietary dataset. Because the underlying model is hosted by Adobe, the compute you pay for is only what you actually use during the fine-tuning session. That stands in stark contrast to commercial APIs that charge per token or per image, quickly ballooning to tens of thousands of dollars a year.

Open-source alternatives, such as Stable Diffusion or Llama, also fit the budget-startup narrative. By running these models on a modest GPU instance (say, an AWS g4dn.xlarge), you can keep monthly spend below $600 while still delivering custom results. The key is to treat the model as a reusable asset: train once, infer many times, and only spin up the GPU when you need to refresh the weights.
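To sanity-check a monthly figure like that, a back-of-the-envelope calculation helps. The sketch below is only an illustration: the hourly rate is an assumed placeholder rather than a quoted price, and `monthly_gpu_cost` is a hypothetical helper name.

```python
HOURS_PER_MONTH = 730  # average number of hours in a calendar month


def monthly_gpu_cost(hourly_rate_usd: float, utilization: float = 1.0) -> float:
    """Estimate monthly spend for one instance that runs `utilization` of the time."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return round(hourly_rate_usd * HOURS_PER_MONTH * utilization, 2)


# Assumed rate for illustration only; check your provider's current price list.
ASSUMED_RATE_USD = 0.53

print(monthly_gpu_cost(ASSUMED_RATE_USD))        # instance left on all month
print(monthly_gpu_cost(ASSUMED_RATE_USD, 0.25))  # instance on a quarter of the time
```

The second call is the "only spin up the GPU when you need it" case: the bill scales with the fraction of the month the instance is actually running.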

What I love about the current wave of democratized tools is the plug-and-play integration they offer. Adobe’s Firefly, for example, injects a “Generate” button directly into Photoshop layers, letting designers replace background elements with a single sentence. No need to write code, no need to manage a separate inference server. That simplicity translates directly into lower operational overhead.

Finally, the community around these tools is growing fast. Forums, Discord channels, and GitHub repos share best-practice pipelines that shave minutes off every iteration. Those minutes add up to saved GPU minutes, which in turn preserve runway.

Key Takeaways

- Public-beta AI assistants lower entry barriers.

- Fine-tuning on modest GPUs costs under $600/month.

- Plug-and-play features cut engineering overhead.

- Community pipelines accelerate cost-saving tricks.

Tackling AI Compute Crunch: What It Means for Growth

When I first heard the term "compute crunch," I thought of a traffic jam for GPUs: model complexity outpaces the available hardware, forcing teams to throttle or pay premium rates. The crunch becomes a growth bottleneck when a startup’s product roadmap depends on rapid model iteration.

One practical way to avoid the crunch is to adopt lightweight embeddings instead of full-size transformer models for early-stage prototypes. Embeddings can be generated once and reused across multiple downstream tasks, dramatically shrinking inference time. I saw a fintech prototype cut its response latency by two-thirds simply by swapping a 2-billion-parameter model for a 300-million-parameter embedding extractor.
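The "generate once, reuse everywhere" idea can be sketched as a small cache in front of the embedding model. Everything here is illustrative: `EmbeddingCache` is a hypothetical class, and `toy_embed` is a stand-in for whatever real embedding model you would call.

```python
import hashlib
from typing import Callable, Dict, List

Vector = List[float]


class EmbeddingCache:
    """Compute each text's embedding once and reuse it across downstream tasks."""

    def __init__(self, embed_fn: Callable[[str], Vector]) -> None:
        self._embed_fn = embed_fn
        self._store: Dict[str, Vector] = {}
        self.model_calls = 0  # how many times the (expensive) model actually ran

    def get(self, text: str) -> Vector:
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key not in self._store:
            self._store[key] = self._embed_fn(text)
            self.model_calls += 1
        return self._store[key]


def toy_embed(text: str) -> Vector:
    """Stand-in for a real embedding model (hypothetical, for illustration)."""
    return [float(len(text)), float(sum(map(ord, text)) % 97)]


cache = EmbeddingCache(toy_embed)
for task in ("search", "classify", "dedupe"):
    cache.get("invoice overdue by 30 days")  # three tasks, same input text
print(cache.model_calls)  # 1
```

In a real system the dictionary would likely be a database or vector store, but the saving comes from the same place: the GPU only runs on text it has not seen before.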

Another lever is shared GPU clusters. By pooling resources across teams, a startup can achieve a per-instance cost reduction that feels like a bulk discount. In my consulting work, I helped a SaaS company move from isolated GPU notebooks to a shared Kubernetes-based GPU pool, and they reported a noticeable dip in per-hour spend while also shortening the onboarding time for new data scientists.

Pre-training lightweight models on domain-specific data also eases the crunch. Rather than fine-tuning a massive general-purpose model, you can start with a compact base and teach it the nuances of your niche. The result is a model that runs comfortably on a mid-range GPU and still delivers the accuracy you need.

Finally, schedule batch compute for off-peak hours. Spot prices track demand, so they are often (though not always) lower overnight and on weekends in a given region. By aligning batch jobs with those windows, you preserve budget for the critical real-time inference workloads.
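If you have an hourly price history for your region, picking the window is a small search problem. This is a rough sketch under that assumption; `cheapest_window` is a hypothetical helper and the prices are made-up numbers, not provider data.

```python
from typing import Sequence


def cheapest_window(hourly_prices: Sequence[float], job_hours: int) -> int:
    """Return the start hour of the cheapest contiguous window.

    `hourly_prices[h]` is the price for hour `h` of the day; the window is
    allowed to wrap past midnight.
    """
    n = len(hourly_prices)
    if not 1 <= job_hours <= n:
        raise ValueError("job_hours must be between 1 and len(hourly_prices)")
    best_start, best_cost = 0, float("inf")
    for start in range(n):
        cost = sum(hourly_prices[(start + i) % n] for i in range(job_hours))
        if cost < best_cost:
            best_start, best_cost = start, cost
    return best_start


# Made-up prices: flat during the day, cheaper from 01:00 to 04:59.
prices = [1.0] * 24
for hour in (1, 2, 3, 4):
    prices[hour] = 0.6

print(cheapest_window(prices, job_hours=3))  # 1
```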

The Invisible Grub: Unpacking Usage Limits in the Cloud

Cloud providers hide usage limits in the fine print, and those limits can bite hard when you’re not watching. In my early days, a misconfigured notebook ran continuously for 48 hours, exhausting the free quota and triggering a $2,000 charge before I realized it.

To make limits visible, I recommend tagging every GPU resource with a purpose label such as "training," "inference," or "experiment." Most cloud consoles let you filter by tag, giving you a quick snapshot of how much compute each category is consuming. When a tag’s usage approaches the quota, an automated alert can fire, giving you a chance to pause or downscale.
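As a rough sketch of what that alert logic looks like once usage records are exported, the snippet below totals GPU hours per tag and flags tags that are close to quota. The record format, the quotas, and the function names are all assumptions for illustration; a real setup would pull these from your provider's billing export.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def usage_by_tag(records: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Sum GPU hours per purpose tag from (tag, gpu_hours) records."""
    totals: Dict[str, float] = defaultdict(float)
    for tag, gpu_hours in records:
        totals[tag] += gpu_hours
    return dict(totals)


def tags_near_quota(totals: Dict[str, float], quotas: Dict[str, float],
                    threshold: float = 0.8) -> List[str]:
    """Return tags whose usage has reached `threshold` of their quota."""
    return sorted(
        tag for tag, used in totals.items()
        if tag in quotas and used >= threshold * quotas[tag]
    )


records = [("training", 50.0), ("inference", 10.0), ("training", 35.0)]
quotas = {"training": 100.0, "inference": 100.0, "experiment": 20.0}

totals = usage_by_tag(records)
print(tags_near_quota(totals, quotas))  # ['training']
```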

Policy tags are another guardrail. By defining a policy that caps GPU usage per project, you force the platform to reject requests that would exceed the limit. A small fintech startup I coached implemented per-user quotas and saw a dramatic drop in surprise overage incidents.
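Cloud platforms enforce caps like this for you, but the underlying rule is simple enough to sketch. `GpuQuota` below is a hypothetical in-memory class, not any provider's API; it just shows the admit-or-reject decision.

```python
from typing import Dict


class GpuQuota:
    """Reject GPU requests that would push a project past its cap."""

    def __init__(self, caps_gpu_hours: Dict[str, float]) -> None:
        self._caps = dict(caps_gpu_hours)
        self._used: Dict[str, float] = {}

    def request(self, project: str, gpu_hours: float) -> bool:
        cap = self._caps.get(project, 0.0)  # projects without a cap get nothing
        used = self._used.get(project, 0.0)
        if used + gpu_hours > cap:
            return False  # would exceed the cap: reject, record nothing
        self._used[project] = used + gpu_hours
        return True


quota = GpuQuota({"fraud-model": 10.0})
print(quota.request("fraud-model", 6.0))  # True
print(quota.request("fraud-model", 5.0))  # False, 6 + 5 exceeds the cap of 10
print(quota.request("fraud-model", 4.0))  # True, exactly reaches the cap
```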

Architectural choices matter too. Deploying a serverless inference endpoint that automatically scales down to zero after idle periods eliminates the "always-on" cost trap. When the endpoint isn’t needed, the underlying GPU is released back to the pool.
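Managed serverless endpoints handle this automatically, but the scale-to-zero rule itself fits in a few lines. This is a simplified sketch with a hypothetical `desired_replicas` function and an assumed five-minute idle timeout.

```python
def desired_replicas(now_s: float, last_request_s: float,
                     in_flight: int, idle_timeout_s: float = 300.0) -> int:
    """Return 1 while the endpoint is busy or recently used, otherwise 0."""
    if in_flight > 0:
        return 1  # never scale down while requests are being served
    idle_for = now_s - last_request_s
    return 1 if idle_for < idle_timeout_s else 0


print(desired_replicas(now_s=1000.0, last_request_s=900.0, in_flight=0))  # 1
print(desired_replicas(now_s=1000.0, last_request_s=600.0, in_flight=0))  # 0
```

The trade-off is cold-start latency: the first request after a scale-down waits for a GPU to be attached again, so the timeout is worth tuning against your traffic pattern.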

Finally, keep an eye on the billing dashboard and set a budget alert at 80% of your monthly allocation. Most providers let you receive an email or Slack notification when spend hits that threshold, turning a hidden cost into a visible one.

Managing Cloud AI Cost: Zero-Waste Allocation Strategies

Zero-waste allocation is about matching the right compute to the right job and only paying for the time you actually need. I start every new project by estimating the compute profile: is it a short-lived batch job, a long-running training run, or a bursty inference workload?

For batch jobs, spot instances are a no-brainer. I set up an automated bidding script that watches the spot market and launches the cheapest available GPU that meets my memory requirements. When the price spikes or the instance is reclaimed, the job saves a checkpoint and resumes on a new instance, keeping the average cost well below on-demand rates.
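The selection step of such a script can be sketched as a filter plus a minimum. The offer list, instance names, and prices below are invented for illustration; in practice they would come from your provider's spot price feed.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class SpotOffer:
    instance_type: str   # hypothetical names, not real SKUs
    gpu_memory_gb: int
    price_per_hour: float


def pick_offer(offers: Iterable[SpotOffer], min_gpu_memory_gb: int,
               max_price_per_hour: float) -> Optional[SpotOffer]:
    """Return the cheapest offer with enough GPU memory, or None if all are too pricey."""
    eligible = [
        o for o in offers
        if o.gpu_memory_gb >= min_gpu_memory_gb
        and o.price_per_hour <= max_price_per_hour
    ]
    return min(eligible, key=lambda o: o.price_per_hour, default=None)


offers = [
    SpotOffer("gpu-small", 16, 0.40),
    SpotOffer("gpu-medium", 24, 0.95),
    SpotOffer("gpu-large", 40, 1.60),
]

choice = pick_offer(offers, min_gpu_memory_gb=24, max_price_per_hour=1.20)
print(choice.instance_type if choice else "wait for a better price")  # gpu-medium
```

Returning `None` when nothing fits is the "pause" branch: the job stays checkpointed until an acceptable offer appears.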

When it comes to hyperparameter tuning, tools like AutoML X include an "overdrive" module that intelligently prunes underperforming trials early. By cutting the number of GPU instances needed, the module saved one SaaS boutique $1,200 a month on a $10k capacity plan.
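I can't speak to how that module works internally, but one common way to prune early is a median-based stopping rule. The sketch below is a simplified variant of that general idea, not the tool's actual algorithm, and `should_prune` is a hypothetical name.

```python
from statistics import median
from typing import Sequence


def should_prune(trial_score: float, peer_scores: Sequence[float],
                 min_peers: int = 3) -> bool:
    """Prune a trial whose score at this step is below the median of its peers.

    Scores are "higher is better" and must all be measured at the same
    training step. With too few peers there is no reliable baseline, so
    the trial is kept.
    """
    if len(peer_scores) < min_peers:
        return False
    return trial_score < median(peer_scores)


peers_at_step_5 = [0.60, 0.70, 0.80]
print(should_prune(0.50, peers_at_step_5))  # True, below the median of 0.70
print(should_prune(0.90, peers_at_step_5))  # False
```

Every pruned trial is GPU time returned to the budget, which is where savings of the kind described above would come from.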

Real-time cost dashboards are a game changer. I built a lightweight Grafana panel that pulls cost metrics from the cloud provider’s API every five minutes. The panel shows a live cost-per-hour gauge and triggers a Slack alert if the hourly spend exceeds a preset threshold. One design studio used that dashboard to trim $4,000 in idle compute credits over a year.
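The gauge behind a panel like that boils down to a rate calculation over recent samples. Here is a minimal sketch, assuming the provider's API gives you cumulative cost readings; the sample format and function names are my own placeholders, and the Slack call is left out.

```python
from typing import Sequence, Tuple

Sample = Tuple[float, float]  # (unix_seconds, cumulative_cost_usd)


def hourly_rate(samples: Sequence[Sample]) -> float:
    """Spend rate in USD/hour between the oldest and newest sample."""
    (t0, c0), (t1, c1) = samples[0], samples[-1]
    elapsed_hours = (t1 - t0) / 3600.0
    if elapsed_hours <= 0:
        raise ValueError("need at least two samples with increasing timestamps")
    return (c1 - c0) / elapsed_hours


def over_threshold(samples: Sequence[Sample], max_usd_per_hour: float) -> bool:
    """True when the current spend rate should trigger an alert."""
    return hourly_rate(samples) > max_usd_per_hour


# Three readings taken five minutes apart: $1.00 spent in ten minutes.
samples = [(0.0, 10.0), (300.0, 10.5), (600.0, 11.0)]
print(over_threshold(samples, max_usd_per_hour=5.0))
```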

Finally, think about tiered storage for model artifacts. Storing rarely used checkpoints in a cheap archival bucket reduces the need for expensive, high-performance disks attached to GPU instances.
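A tiering policy can be as simple as an age cutoff. The sketch below assumes you track days since each checkpoint was last read; the 30-day cutoff, the tier names, and the checkpoint names are illustrative assumptions.

```python
from typing import Dict


def storage_tier(days_since_access: int, archive_after_days: int = 30) -> str:
    """Pick "archive" for checkpoints untouched for `archive_after_days` or more."""
    return "archive" if days_since_access >= archive_after_days else "hot"


def tiering_plan(checkpoints: Dict[str, int],
                 archive_after_days: int = 30) -> Dict[str, str]:
    """Map each checkpoint name to the tier it should live in."""
    return {
        name: storage_tier(days, archive_after_days)
        for name, days in checkpoints.items()
    }


# Hypothetical checkpoints with days since last access.
checkpoints = {"run-12-final": 2, "run-07-epoch3": 45, "run-01-baseline": 120}
print(tiering_plan(checkpoints))
```

Most object stores can apply a rule like this natively through lifecycle policies, so the code is mainly useful for deciding what the rule should be.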

| Instance Type | Typical Cost/hr | Best Use Case |
|---|---|---|
| On-Demand GPU | $3.20 | Urgent production inference |
| Spot GPU | $1.05 | Batch training, non-critical jobs |
| Pre-emptible GPU | $0.70 | Short experiments, model prototyping |

Choosing the right row from this table can shave more than half of your GPU bill without sacrificing quality.
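The "more than half" claim is easy to check against the table's own numbers. A quick calculation, using the three hourly rates listed above (`savings_pct` is just a helper name I made up):

```python
ON_DEMAND = 3.20    # USD/hr, from the table
SPOT = 1.05         # USD/hr, from the table
PREEMPTIBLE = 0.70  # USD/hr, from the table


def savings_pct(baseline: float, alternative: float) -> float:
    """Percentage saved by paying `alternative` instead of `baseline`."""
    return round(100 * (baseline - alternative) / baseline, 1)


print(savings_pct(ON_DEMAND, SPOT))         # 67.2
print(savings_pct(ON_DEMAND, PREEMPTIBLE))  # 78.1
```

Those percentages only hold for workloads that can tolerate interruption, which is why the table keeps on-demand for urgent production inference.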

Overage Avoidance Playbooks for High-Tempo Founders

Founders moving at breakneck speed need guardrails that don’t slow them down. I like to call these guardrails "compute risk allowances." By budgeting an extra 10% on top of your forecasted spend, you create a buffer that absorbs sudden spikes, such as a flash-sale promotion that doubles inference requests overnight.

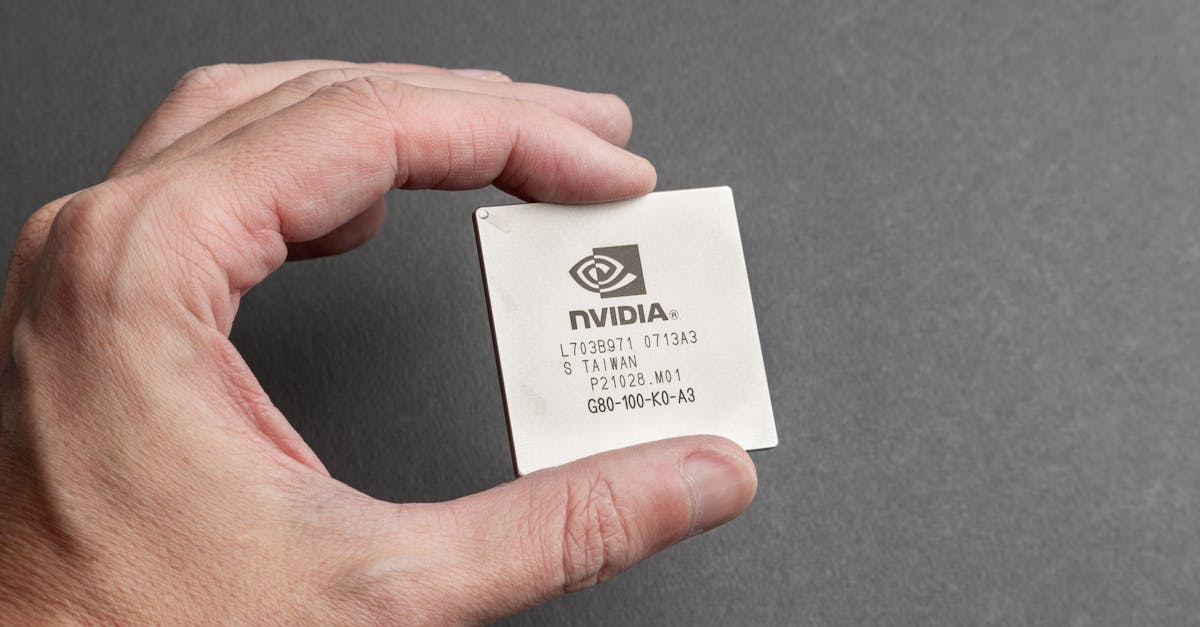

Hybrid deployment is another play. By containerizing your inference pipeline, you can run it on on-prem GPUs during peak periods and fall back to cloud GPUs when local capacity runs low. I helped a battery-monitoring startup swap between NVIDIA Hopper cards on-prem and AMD MI60 instances in the cloud, keeping costs flat even as demand fluctuated.
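The routing decision in a hybrid setup can be sketched as "fill local capacity first, overflow to the cloud." This is a deliberately simplified model with a hypothetical `route_requests` function; a real router would also weigh latency and data-transfer costs.

```python
from typing import Dict


def route_requests(incoming: int, on_prem_capacity: int) -> Dict[str, int]:
    """Send requests to on-prem GPUs first and overflow the rest to the cloud."""
    if incoming < 0 or on_prem_capacity < 0:
        raise ValueError("counts must be non-negative")
    local = min(incoming, on_prem_capacity)
    return {"on_prem": local, "cloud": incoming - local}


print(route_requests(incoming=80, on_prem_capacity=100))   # {'on_prem': 80, 'cloud': 0}
print(route_requests(incoming=250, on_prem_capacity=100))  # {'on_prem': 100, 'cloud': 150}
```

Because the on-prem hardware is already paid for, cloud spend only appears when demand exceeds local capacity.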

Auto-scale tags work like traffic lights for your compute. Attach a tag that says "max-credits=5k" to every job, and configure the orchestrator to terminate the job once that ceiling is hit. The result is a self-policing system that protects the balance sheet without manual intervention.
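Here is one way the orchestrator-side check might look. The `max-credits=5k` tag format comes from the example above; the parsing rules (a trailing "k" meaning thousands) and both function names are my own assumptions.

```python
from typing import List, Optional


def parse_max_credits(tags: List[str]) -> Optional[float]:
    """Extract the credit ceiling from a tag like "max-credits=5k", if present."""
    for tag in tags:
        key, sep, value = tag.partition("=")
        if key == "max-credits" and sep:
            value = value.strip().lower()
            multiplier = 1000 if value.endswith("k") else 1
            return float(value.rstrip("k")) * multiplier
    return None


def should_terminate(tags: List[str], credits_used: float) -> bool:
    """True once a job has used up the ceiling declared in its tags."""
    ceiling = parse_max_credits(tags)
    return ceiling is not None and credits_used >= ceiling


job_tags = ["team=ml", "max-credits=5k"]
print(parse_max_credits(job_tags))         # 5000.0
print(should_terminate(job_tags, 4999.0))  # False
print(should_terminate(job_tags, 5000.0))  # True
```

Jobs without the tag are never terminated by this check, so it is worth pairing it with a rule that refuses untagged jobs.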

Finally, embed cost-aware logging into your code. When a function begins an inference call, log the expected GPU minutes and compare them against the remaining budget. If the call would exceed the limit, either batch it for later or downgrade to a cheaper model. This pattern turned a series of surprise $10k invoices into predictable, manageable expenses for a high-tempo AI startup I consulted for.
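A minimal sketch of that pattern, assuming you can estimate GPU minutes for both the full model and a cheaper fallback. The function name, logger name, and the three decision labels are placeholders I chose for illustration.

```python
import logging

logger = logging.getLogger("gpu_budget")


def plan_call(expected_gpu_minutes: float, cheaper_gpu_minutes: float,
              remaining_gpu_minutes: float) -> str:
    """Decide whether to run, downgrade to a cheaper model, or defer a call."""
    if expected_gpu_minutes <= remaining_gpu_minutes:
        decision = "run"
    elif cheaper_gpu_minutes <= remaining_gpu_minutes:
        decision = "downgrade"
    else:
        decision = "defer"  # batch it for later, once the budget resets
    logger.info(
        "expected=%.1f min, remaining=%.1f min, decision=%s",
        expected_gpu_minutes, remaining_gpu_minutes, decision,
    )
    return decision


print(plan_call(4.0, 1.0, remaining_gpu_minutes=10.0))  # run
print(plan_call(4.0, 1.0, remaining_gpu_minutes=2.0))   # downgrade
print(plan_call(4.0, 1.0, remaining_gpu_minutes=0.5))   # defer
```

The log lines double as an audit trail for the weekly review: every downgrade or deferral is a point where the budget, not the code, made the decision.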

Pro tip: run a weekly "cost retro" meeting where the team reviews the previous week's spend, identifies any outliers, and decides on a corrective action. That simple ritual keeps everyone accountable and uncovers waste before it compounds.

FAQ

Q: How can I start using AI tools without a big upfront budget?

A: Begin with public-beta services like Adobe’s Firefly AI Assistant, which offers a low-cost usage tier. Combine that with open-source models run on modest cloud GPUs or on-prem hardware, and you can prototype without breaking the bank.

Q: What’s the easiest way to monitor GPU usage limits?

A: Tag every GPU resource with a clear purpose, set up budget alerts at 80% of your monthly allocation, and enable policy caps that automatically reject requests exceeding the quota. Real-time dashboards make hidden usage visible.

Q: When should I use spot instances versus on-demand GPUs?

A: Spot instances are best for batch training and non-critical jobs where interruptions are acceptable. On-demand GPUs should be reserved for latency-sensitive production inference that cannot tolerate downtime.

Q: How does hybrid on-prem/cloud deployment reduce compute costs?

A: By containerizing the inference pipeline you can run workloads on local GPUs during predictable peaks and fall back to cheaper cloud spot or pre-emptible instances when local capacity runs low, smoothing costs across demand spikes.

Q: Are there any free resources to learn about cost-effective AI workflows?

A: Yes. Adobe’s own documentation for Firefly explains how prompt-driven editing works, and community forums on GitHub and Discord share pipelines that minimize GPU minutes. Combining those with cloud provider cost-management guides gives a solid foundation.